Posts filed under ‘Evaluation tools (surveys, interviews..)’

Implications of COVID-19 on evaluation

The ILO have published useful guidelines on “Implications of COVID-19 on evaluations in the ILO: Practical tips on adapting to the situation”. They are well worth a read, as they offer guidance for the many of us carrying out evaluations remotely these days.

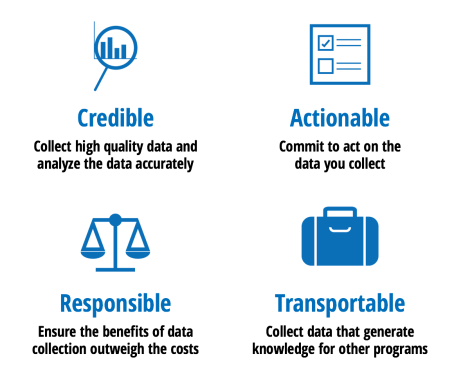

CART principles for monitoring

Innovations for Poverty Action have developed some useful guidance on activity monitoring and evaluation based on their own CART principles: credible, actionable, responsible, and transportable (see summary graphic below).

The guidance is particularly useful for those interested in monitoring, which is an issue many organisations find challenging. Read more here>>

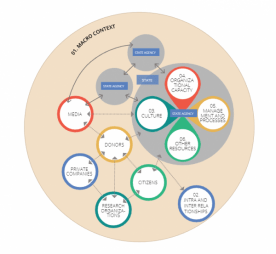

A framework for context analysis

Here is an interesting tool to help with context analysis: ‘Context Matters’ framework – to support evidence-informed policy making. The tool is interactive and you can view the different elements from various perspectives. Designed to support the use of knowledge in policy-making, it could also be of interest to researchers and evaluators as an analytical tool for contexts.

View the interactive framework here>>

Read more about the framework here>>

Thanks to Better Evaluation for introducing this new resource to me.

New e-learning course: Real-time evaluation and adaptive management

My friends at TRAASS have launched a new e-learning course on Real-time evaluation and adaptive management:

“What exactly is an RTE/AM approach and how can it help in unstable or conflict affected situations? Do M&E practitioners need to ditch their standard approaches in jumping on this latest bandwagon? What can you do if there is no counterfactual or dataset? This modular course covers these challenges and more.”

Tips for young / emerging evaluators

The Evaluation for Development blog from Zenda Ofir has been collating tips for young / emerging evaluators – that even experienced evaluators will find interesting. Here are some highlights:

From Zenda herself:

Top Tip 1. Open your mind. Read

Top Tip 2. Be mindful and explicit about what frames and shapes your evaluative judgments.

Top Tip 3. Be open to what constitutes “credible evidence”.

Top Tip 4. Focus a good part of your evaluative activities on “understanding”.

Top Tip 5. Be or become a systems thinker who can also deal with some complexity concepts.

Read more about these tips>>

From Juha Uitto:

Top Tip 1. Think beyond individual interventions and their objectives.

Top Tip 2. Understand, deal with and assess choices and trade-offs made or that should have been made.

Top Tip 3. Methods should not drive evaluations.

Top Tip 4. Think about our interconnected world, and implore others to do the same.

Read more about these tips>>

From Benita Williams:

Top Tip 1. The cruel tyranny of deadlines.

Top Tip 2. Paralysis from juggling competing priorities.

Top Tip 3. Annoyance when you are the messenger who gets shot at

Top Tip 4. Working with an evaluand that affects you emotionally

Top Tip 5. Feeling rejected if you do not land an assignment

Top Tip 6. Feeling demoralized when you work with people who do not understand evaluation

Top Tip 7. Feeling discouraged because of wasted blood sweat and tears

Top Tip 8. Feeling lazy if you try to maintain work-life balance when other consultants seem to work 24/7

Top Tip 9. Feeling overwhelmed by all of the skills and knowledge you should have

Read more about these tips>>

And from Michael Quinn Patton, just one tip:

Top Tip 1. Steep yourself in the classics.

Read more about this tip>>

Using Sankey diagrams for data presentation

I’ve always found Sankey diagrams an illustrative way to show transfers from inputs to outputs but have never found a use for them in my own work until now…

The following Sankey diagram shows research reports on crises on the left and the number of challenges identified (for humanitarian surge response) per crisis. The right shows the categories used to group the challenges (“Resource gaps, Policies and systems”, etc). This provides a visual overview of the challenges identified and their volume by crisis and type of challenge.

I produced this diagram using a free online tool. If you are interested in the research reports, they can be found here.
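For anyone who would rather prepare this kind of diagram in code than with an online tool, here is a minimal sketch of the data step: counting challenges per crisis and category, then turning the counts into the node labels and weighted links that a Sankey diagram needs. This is not how the diagram above was made. The crisis names, the records and the function name `build_sankey_links` are all hypothetical placeholders, and the sketch assumes that names on the left never also appear on the right.

```python
from collections import Counter

# Hypothetical records: one (crisis report, challenge category) pair per
# challenge identified. These are placeholders, not the real research data.
challenges = [
    ("Crisis A", "Resource gaps"),
    ("Crisis A", "Policies and systems"),
    ("Crisis A", "Resource gaps"),
    ("Crisis B", "Policies and systems"),
    ("Crisis B", "Resource gaps"),
]


def build_sankey_links(records):
    """Aggregate (source, target) pairs into node labels and weighted links."""
    counts = Counter(records)
    sources = sorted({source for source, _ in counts})
    targets = sorted({target for _, target in counts})
    # Left-hand nodes come first, then right-hand nodes.
    # Assumes no label is used on both sides.
    labels = sources + targets
    index = {label: position for position, label in enumerate(labels)}
    links = [
        {"source": index[source], "target": index[target], "value": number}
        for (source, target), number in sorted(counts.items())
    ]
    return labels, links


labels, links = build_sankey_links(challenges)
for link in links:
    print(labels[link["source"]], "->", labels[link["target"]], ":", link["value"])
```

The width of each band in the diagram corresponds to the `value` of a link, so the result can be passed to whichever charting tool you prefer. Plotly's Sankey trace, for example, takes node labels plus lists of link sources, targets and values in much this shape.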

New resource: Contribution Analysis – assessing advocacy influence

As a long-time fan of contribution analysis for advocacy evaluation, I was very interested to see this new handbook on Contribution Analysis in Policy Work – Assessing Advocacy’s Influence (pdf). The handbook takes you through the steps of contribution analysis, with examples provided.

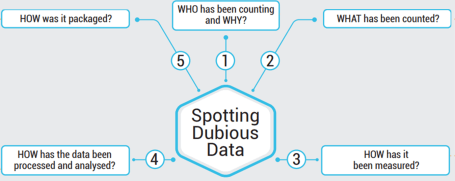

Spotting dubious data

ACAPS has produced a great poster on “Spotting Dubious Data”. They make reference to humanitarian action but it applies across all sectors. Below is a simplified version of the poster.

I think point 1 in particular is often ignored when looking at data: WHY was this data collected?

Participatory tools for M&E

ActionAid has just released an online toolbox and platform focused on participatory tools and processes for monitoring and evaluation.

Check out the Tools page that features some 80 participatory tools.

Here is a description of the toolbox from ActionAid:

The Reflection-Action Toolbox is an online platform which enable people to connect around how participatory tools and processes are used in practice. The aim is to create a global community of practice and provide an opportunity for M&E practitioners to access range of participatory tools, promote shared learning about added value of these tools, challenges faced, adaptations and innovations made in different contextual realities where applied.

Image from the Power Flower tool!

Event – The Future of Technology for Evaluation

A very interesting event is scheduled for February 20-21, 2017 in London: the Future of Technology for Monitoring, Evaluation, Research and Learning (MERL TECH). Learn more about the event>>