Posts filed under ‘Training evaluation’

New book: Monitoring and Evaluation Training: A Systematic Approach

A systematic approach to monitoring and evaluation (M&E) training for programs and projects has long been an under-appreciated area. This gap has now been filled by a new book from Scott Chaplowe and J. Bradley Cousins:

Monitoring and Evaluation Training: A Systematic Approach

“Bridging theoretical concepts with practical, how-to knowledge, authors Scott Chaplowe and J. Bradley Cousins draw upon the scholarly literature, applied resources, and over 50 years of combined experience to provide expert guidance for M&E training that can be tailored to different training needs and contexts, from training for professionals or non-professionals, to organization staff, community members, and other groups with a desire to learn and sustain sound M&E practices.”

2 day Course in Most Significant Change Technique, London, June 10-11, 2013

For those who are looking for insights into a participatory M&E method, this may be of interest:

A two day course on the Most Significant Change (MSC) technique – a participatory monitoring & evaluation technique ideally suited to providing qualitative information on project/programme impact. The course offers several practical exercises and provides insights into other real world examples of MSC use.

Date: June 10-11, 2013

Venue: London, NCVO National Council for Voluntary Organisations

Society Building, 8 All Saints Street, London (near Kings Cross)

Presenters: Theo Nabben with secondary input from Rick Davies

Costs: £ 450 per person for 2 days inclusive of meals. Please note: no scholarships are available.

Accommodation and transport are participants’ own responsibility. Course notes and electronic files will be provided. A 10% discount is available to members of the German and UK evaluation societies.

For course details, contact Theo at theo@socialimpactconsulting.com.au or through their website >>

Going beyond standard training evaluation

During the recent European Evaluation Conference, I saw a very interesting presentation on going beyond the standard approach to training evaluation.

Dr. Jan Ulrich Hense of LMU München presented his research on “Kirkpatrick and beyond: A comprehensive methodology for influential training evaluations” (view Dr Hense’s full presentation here).

As I’ve written about before (well, in a post four years ago…), Donald Kirkpatrick developed a model for training evaluation that focused on evaluating four levels of impact:

1. Reaction

2. Learning

3. Behavior

4. Results

Dr Hense provides a new perspective – we could say an updated approach – to this model. Even further, he has tested his ideas with a real training evaluation in a corporate setting.

I particularly like how he considers the “input” aspect (e.g. participants’ motivation) and the context of the training (which can be very important to influence its outcomes).

View Dr Hense’s presentation on his website.

Measuring long term impact of conferences

Often we evaluate conferences with their participants just after the conferences, measuring mostly reactions and learnings, as I’ve written about previously.

Wouldn’t it be more interesting actually to try and measure the longer term impact of a conference? This is what the International AIDS Society has done concerning one of its international conferences – measuring longer term impact 14 months after the conference – you can view the report (pdf) here.

Their overall assessment of impact was as follows:

“AIDS 2008 had a clear impact on delegates’ work and on their organizations, and that the conference influence has extended far beyond those who attended, thanks to networking, collaboration, knowledge sharing and advocacy at all levels.”

Network mapping tool

As regular readers will know, I am interested in network mapping and have undertaken some projects where I have used network mapping to assess networks that have emerged as a result of conferences.

Here is quite an interesting tool, Net-Map, an interview-based mapping tool. The creators of this tool state that it is a “tool that helps people understand, visualize, discuss, and improve situations in which many different actors influence outcomes”.

Read further about the tool and view many of the illustrative images here>>

Glenn

Event scorecard

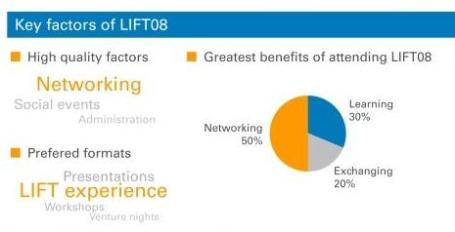

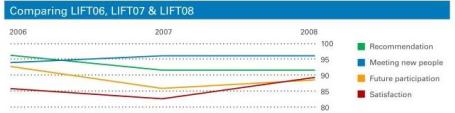

In the work I do to evaluate conferences and events, I have put together what I believe is a “neat” way of displaying the main results of an evaluation: an event scorecard. In the evaluation of a conference that occurs every year in Geneva, Switzerland, the LIFT conference, the scorecard summarises both qualitative and quantitative results taken from the survey of attendees. Above you can see a snapshot of the scorecard.

As I have evaluated the conference for three years now, we were also able to show some comparative data as you can see here:

If you are interested, you can view the full scorecard by clicking on the thumbnail image below:

And for the really keen, you can read the full LIFT08 evaluation report (pdf).

Greetings from Tashkent, Uzbekistan from where I write this post. I’m here for an evaluation project and off to Bishkek, Kyrgyzstan now.

Glenn

Perceptions of evaluation

I’ve just spent a week in Armenia and Georgia (pictured above) for an evaluation project where I interviewed people from a cross section of society. These are both fascinating countries, if you ever get the chance to visit… During my work there, I was wondering – what do people think about evaluators? For this type of on-site evaluation, we show up, ask some questions – and leave – and they may never see us again.

From this experience and others I’ve tried to interpret how people see evaluators – and I believe people see us in multiple ways including:

The auditor: you are here to check and control how things are running. Your findings will mean drastic changes for the organisation. Many people see us in this light.

The fixer: you are here to listen to the problems and come up with solutions. You will be instrumental in changing the organisation.

The messenger: you are simply channelling what you hear back to your commissioning organisation. But this is an effective way to pass a message or an opinion to the organisation via a third party.

The researcher: you are interested in knowing what works and what doesn’t. You are looking at what causes what. This is for the greater science and not for anyone in particular.

The tourist: you are simply visiting on a “meet and greet” tour. People don’t really understand why you are visiting and talking to them.

The teacher: you are here to tell people how to do things better. You listen and then tell them how they can improve.

We may have a clear idea of what we are trying to do as evaluators (e.g. to assess results of programmes and see how they can be improved), but we also have to be aware that people will see us in many different ways and from varied perspectives – which just makes the work more interesting….

Glenn

Hints on interviewing for evaluation projects

Evaluators often use interviews as a primary tool to collect information. Many guides and books exist on interviewing – but not so many for evaluation projects in particular. Here are some hints on interviewing based on my own experiences:

1. Be prepared: No matter how wide-ranging you would like an interview to be, you should as a minimum note down some subjects you would like to cover or particular questions to be answered. A little bit of structure will make the analysis easier.

2. Determine what is key for you to know: Before starting the interview, you might have a number of subjects to cover. It may be wise to determine what is key for you to know – what are the three to four things you would like to know from every person interviewed? Often you will get side-tracked during an interview and later, going through your notes, you may discover that you forgot to ask about a key piece of information.

3. Explain the purpose: Before launching into questions, explain in broad terms the nature of the evaluation project and how the information from the discussion will be used.

4. Take notes as you discuss: Even if it is just the main points. Do not rely on your memory as after you have done several interviews you may mix up some of the responses. Once the interview has concluded try to write further on the main points raised. Of course, recording and then transcribing interviews is recommended but not always possible.

5. Take notes about other matters: It’s important also to note down not only what a person says but how they say it – you need to look out for body language, signs of frustration, enthusiasm, etc. Any points of this nature I would normally note down at the end of my interview notes. This is also important if someone else reads your notes in order for them to understand the context.

6. Don’t offer your own opinion or indicate a bias: Your main role is to gather information and you shouldn’t try to defend a project or enter into a debate with an interviewee. Remember, listening is key!

7. Have interviewees define terms: If someone says “I’m not happy with the situation”, you have understood that they are not happy but not much more. Have them define what they are not happy about. It’s the same if an interviewee says “we need more support”. Ask them to define what they mean by “support”.

8. Ask for clarification, details and examples: Such as “why is that so?”, “can you provide me with an example?”, “can you take me through the steps of that?” etc.

Hope these hints are of use.

Glenn

The path from outputs to outcomes

Organisations often focus on evaluating the “outputs” of their activities (what they produce) and not on “outcomes” (what their activities actually achieve), as I’ve written about before. Many international organisations and NGOs have now adopted a “results-based management” approach involving the setting of time-bound measurable objectives which aim to focus on outcomes rather than outputs – as outcomes are ultimately a better measure of whether an activity has actually changed anything or not.

Has this approach been successful? A new report from the UN’s development agency, UNDP, indicates that the focus is still on outputs rather than outcomes because the link between the two is not clear. As they write:

“The attempt to shift monitoring focus from outputs to outcomes failed for several reasons…For projects to contribute to outcomes there needs to be a convincing chain of results or causal path. Despite familiarity with tools such as the logframe, no new methods were developed to help country staff plan and demonstrate these linkages and handle projects collectively towards a common monitorable outcome.”

(p.45)

Interestingly, they highlight the lack of clarity in linking outputs to outcomes – in showing a causal path between the two. Take, for example, the difficulty in showing that something that I planned for and implemented (e.g. a staff training program – an output) led to a desirable result (e.g. better performance of an organisation – an outcome).

One conclusion we can make from this study is that we do need more tools to help us establish the link between outputs and outcomes – that would certainly be a great advance.

Read the full UN report here >>

Glenn

Seven tips for better email invitations for web surveys

Further to my earlier post on ten tips for better web surveys, the email that people receive inviting them to complete an online survey is an important factor in persuading people to complete the survey – or not. Following are some recommended practices and a model email to help you with this task:

1. Explain briefly why you want an input: it’s important that people know why you are asking their opinion or feedback on a given subject. State this clearly at the beginning of your email, e.g. “As a client of XYZ, we would appreciate your feedback on products that you have purchased from us”.

2. Tell people who you are: it’s important that people know who you are (so they can assess whether they want to contribute or not). Even if you are a marketing firm conducting the research on behalf of a client, this can be stated in the email as a boilerplate message (see example below). In addition, the name and contact details of a “real” person signing off on the email will help.

3. Tell people how long it will take: quite simply, “this survey will take you some 10 minutes to complete”. But don’t underestimate – people do get upset if you tell them it will take 10 minutes and 30 minutes later they are still going through your survey…

4. Make sure your survey link is clickable: survey software often generates very long links for individual surveys. You can often get around this by masking the link, like this: “click to go to survey >>”. However, some email systems do not read masked links correctly, so you may be better off copying the full link into the email, as in the example below. In addition, send your email invitation to yourself as a test, so you can click on your survey link just to make sure it works…

5. Reassure people about their privacy and confidentiality: people have to be reassured that their personal data and opinions will not be misused. A sentence covering these points should be found in the email text and repeated on the first page of the web survey (also check local legal requirements on this issue).

6. Take care with the “From”, “To” and “Subject”: If possible, the email address featured in the “From” field should be that of a real person. If your survey comes from info@wizzbangsurveys.net, it may end up in many people’s spam folders. The “To” field should contain an individual email address only – we still receive email invitations where we can see 100s of email addresses in the “To” field, which doesn’t really instill confidence as to how your personal data will be used. The “Subject” is important also – you need something short and straight to the point (see example below). Avoid using spam-catching terms such as “win” or “prize”.

7. Keep it short: You can often fall into the trap of over-explaining your survey and hiding the link somewhere in the email text or right at the bottom. Try and keep your text brief – most people will decide in seconds if they want to participate or not – and they need to be able to understand why they should, for whom, how long it will take and how (“Where is the survey link?!”).

Model email invitation:

From: j.jones@xyzcompany.net

To: glenn.oneil@gmail.com

Subject: XYZ Summit 2008 – Seeking your feedback

Dear participant,

On behalf of XYZ, we thank you for your participation in the XYZ Summit.

We would very much appreciate your feedback on the Summit by completing a brief online survey. This survey will take some 10 minutes to complete. All replies are anonymous and will be treated confidentially.

To complete the survey, please click here >>

If this link does not work, please copy and paste the following link into your internet window:

http://optima.benchpoint.com/optima/SurveyPop.aspx?query=view&SurveyID=75&SS=0ZJk1RORb

Thank you in advance; your feedback is very valuable to us.

Kind regards,

J. Jones

Corporate Communications

XYZ Company

email: j.jones@xyzcompany.net

tel: ++ 1 123 456 789

Benchpoint has been commissioned by XYZ to undertake this survey. Please contact Glenn O’Neil of Benchpoint Ltd. if you have any questions: oneil@benchpoint.com

The following article from Quirks Marketing Research Review also contains some good tips on writing email invitations.
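For those who send their invitations with a script rather than through a survey tool, here is a minimal Python sketch of how a few of the tips above might be applied. It builds one message per recipient (so nobody sees 100s of addresses in the “To” field), states how long the survey takes, pastes the full link into the text, and refuses a subject containing “win” or “prize”. The function names, the SPAM_TERMS list and the example.org addresses are my own assumptions for illustration, and the sketch only builds the messages, it does not send them.

```python
from email.message import EmailMessage

# Hypothetical list of words to keep out of the subject line (tip 6).
SPAM_TERMS = ("win", "prize")


def build_invitation(sender, recipient, survey_url, minutes=10,
                     subject="XYZ Summit 2008 – Seeking your feedback"):
    """Build one survey invitation addressed to a single recipient."""
    # Tip 6: reject subjects that contain spam-catching terms.
    if any(term in subject.lower().split() for term in SPAM_TERMS):
        raise ValueError("subject contains a spam-catching term")

    msg = EmailMessage()
    msg["From"] = sender        # ideally a real person's address (tip 6)
    msg["To"] = recipient       # one individual address only (tip 6)
    msg["Subject"] = subject
    # Tips 3, 4, 5 and 7: short text, time needed, privacy, full link.
    msg.set_content(
        "Dear participant,\n\n"
        "We would very much appreciate your feedback on the Summit.\n"
        f"This survey will take some {minutes} minutes to complete.\n"
        "All replies are anonymous and will be treated confidentially.\n\n"
        "To complete the survey, please copy and paste this link:\n\n"
        f"{survey_url}\n\n"
        "Kind regards,\n"
        "J. Jones\n"
    )
    return msg


def build_invitations(sender, recipients, survey_url):
    """Return a separate message for every recipient in the list."""
    return [build_invitation(sender, r, survey_url) for r in recipients]
```

Each message could then be passed to whatever mail system you use. As in tip 4, it is worth putting your own address first in the list so that you can test the link before anyone else receives it.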

Glenn