Posts filed under ‘Web metrics’

Top metrics for social media

Like many, you may be confused as to what you should measure on the social media platforms you are using for your communications.

Well, Katie Delahaye Paine, aka The Measurement Queen, has offered her valuable advice on the top five social media metrics you should be measuring:

- Net increase in share of desirable conversation

- Top five performing pieces of content, measured by conversion

- Percentage increase in conversions

- Net growth in high quality engagement

- Cost-effectiveness comparison

I really like the focus on engagement; read more here (pdf) where Katie explains each metric for you.

Beyond online vanity metrics

Here is a very interesting study (pdf) from the Mobilisation Lab on what counts and doesn’t for online metrics and campaigns.

The study looks at what they call “vanity metrics” for online campaigns, which they define as “data that are easily manipulated, are biased toward the short-term, often paint a rosy picture of program success, or do not help campaigners make wise strategic decisions”. Examples of vanity metrics include the number of petition signatures, web traffic and the number of “opens” (of emails, I guess).

So what do they recommend campaigns should be measuring?

They have plenty of good suggestions and insights. Here are some of the metrics they mentioned that could be more significant (and possible to measure online):

- Monthly members returning for action

- Actions per member (rather than size of lists)

- Number of members actively part of a campaign
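As a rough illustration of how the metrics above could be computed from a simple log of member actions, here is a minimal Python sketch. The log format, the member names, the list size and the function names are all hypothetical and are not taken from the Mobilisation Lab study. "Returning" is interpreted here as "active this month and also active at some earlier point", which is only one possible reading.

```python
from datetime import date

# Hypothetical action log: (member_id, date of action).
actions = [
    ("ana", date(2013, 1, 5)),
    ("ben", date(2013, 1, 20)),
    ("cy", date(2013, 2, 2)),
    ("ana", date(2013, 2, 9)),
    ("ana", date(2013, 2, 14)),
]
list_size = 10  # hypothetical total number of members on the list


def actions_per_member(actions, list_size):
    """Total number of actions divided by the size of the list."""
    return len(actions) / list_size


def active_members(actions, year, month):
    """Members who took at least one action in the given month."""
    return {m for m, d in actions if (d.year, d.month) == (year, month)}


def returning_members(actions, year, month):
    """Members active in the given month who had also acted before it."""
    first_of_month = date(year, month, 1)
    earlier = {m for m, d in actions if d < first_of_month}
    return active_members(actions, year, month) & earlier


print(actions_per_member(actions, list_size))
print(sorted(active_members(actions, 2013, 2)))
print(sorted(returning_members(actions, 2013, 2)))
```

The point of the sketch is that all three figures come from what members do, not from how long the list is, so a large but inactive list scores poorly.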

A pragmatic guide to monitoring and evaluating research communications using digital tools

The approach taken relates online measurement tools to four levels of assessing influence of communications on policy (an aim of research communications):

- Management

- Outputs

- Uptake

- Outcomes and impact

The last level, outcomes and impact, is of course the hardest to measure with digital tools. But I think that if you have access to your target audiences, this can be done through in-depth interviews or, more simply, through email surveys asking how they have used the research products, which can then provide an indication of the role they have played in influencing policy.

Measuring success in online communities – part 2

Further to my earlier post on measuring online communities, I had the opportunity last weekend to present a module on this subject to a group of students following the SAWI diploma on “Spécialiste en management de communautés & médias sociaux”.

The slides used for this presentation are found below – they are in French – English translation will come….soon!

Employee engagement is cool. Employee surveys are not

Using Google Analytics to track the relative value of your Offer

Some lessons for the communications Evaluation profession

It is some time since I looked at my Google Analytics account. A pity, because it can reveal some dramatic insights into global trends. And the quality and mine-ability of the data is improving month by month.

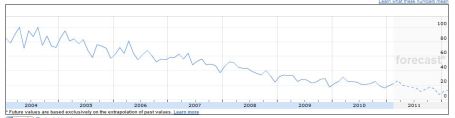

I wanted to see what was happening in Benchpoint’s main marketplace, which is specialist online surveys of employee opinion in large companies. So I looked up “employee surveys”. I was surprised (and shocked) to see that Google searches for this had declined since their peak in 2004 to virtual insignificance.

This was worrying, because our experience is that the sector is alive and well, with growing competition.

On the whole, we advise against general employee surveys, preferring surveys which gain insight into specific areas.

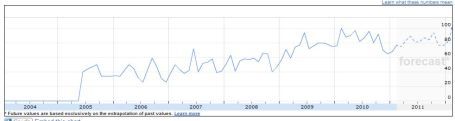

So I contrasted this with a search for “Employee Engagement”, on its own. The opposite trend! This search term has enjoyed steady growth, with the main interest coming from India, Singapore, South Africa, Malaysia, Canada and the USA, in that order.

“Employee engagement surveys”, which first appeared in Q1 2007, also shows a contrarian trend, with most interest coming from India, Canada, the UK and the USA.

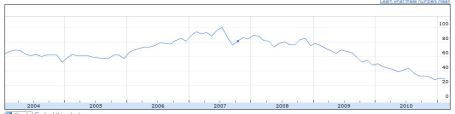

Looking at the wider market, here is the chart for the search term “Surveys” – a steady decline since 2007

But contrast this with searches for “Survey Monkey”

Where is all this leading us? Google is remarkably good at recording what’s cool and what’s not, in great detail and in real time. There are plenty of geeks out there who earn good money doing it for the big international consumer companies. And what it tells us is that, more than ever, positioning is key.

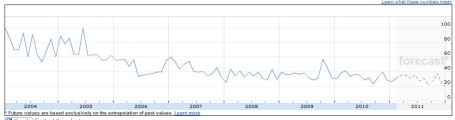

Our own field, “Communications Evaluation”, is fairly uncool. Maybe we need to invent a new sexy descriptor for what we do?

But note, on the chart below, the peaks in the autumn of 2009 and 2010, when the AMEC Measurement Summits were held. Sudden spikes in interest.

This blog and Benchpoint have the copyright of “Intelligent measurement”, which is holding its own in the visibility and coolness stakes, with this blog giving a boost way back in 2007…

Conclusions:

- Get a Google Analytics account, start monitoring the keywords people are using to search for your business activity, and adapt your website accordingly

- As an interest group/profession, we probably need to adopt a different description of what we do if we wish to maintain visibility and influence. Suggestions anyone? Discuss!

Sorry for such a long post!

Richard

Measuring success in online communities

At the Lift conference this week in Geneva, I heard a lot of speakers mention the need to measure and evaluate how online tools are being used, for what purpose and with what impact (about time!).

One speaker, Tiffany St James spoke on “How to encourage involvement in online communities”. The above illustration shows the main aspects of her presentation, where she suggested some key performance indicators for measuring online communities, notably:

Outputs: how many visits, referrals, subscribers, loyalty, web analytics, bounce rates

Outtakes: messages and experience for user satisfaction, measuring change of attitude

Outcomes: action-what do you want the user to do?

You can view a video of Tiffany’s presentation here>>

(illustration fabulously done by Sabine Soeder of Alchemy).

Web analytics and communications evaluation

When evaluating a communications project, I often consider the web metrics aspect of the project, if a website played an important part in the project. Web metrics are statistics generated by tools that measure website traffic, such as how many people visited a web page, where did they come from, etc.

Seth Duncan has recently produced for the US-based Institute for PR a very interesting paper on this subject:

The paper focuses on the aspect of referral (e.g. which is the most “efficient” source of traffic for a website) but also contains some intriguing descriptions of advanced statistical methods for web analytics.

Evaluating online communication tools

Online tools, such as corporate websites, members’ directories or portals, increasingly play an important role in communications strategies. And of course, they are increasingly important to evaluate.

I just concluded an evaluation of an online tool, created to facilitate the exchange of information amongst a specific community. The tool in question, the Central Register of Disaster Management Capacities, is managed by the United Nations Office for the Coordination of Humanitarian Affairs.

The evaluation methodology that I used for evaluating this online tool is interesting as it combines:

- Content analysis

- Network mapping

- Online survey

- Interviews

- Expert review

- Web metrics

And for once, you can dig into the methodology and findings as the evaluation report is available publicly: View the full report here (pdf) >>

Communications evaluation – 2009 trends

Last week I gave a presentation on evaluation for communicators (pdf) at the International Federation of Red Cross and Red Crescent Societies. A communicator asked me what trends had I seen in communications evaluation, particularly relevant to the non-profit sector. This got me thinking and here are some of the trends I have seen in 2008 that I believe are an indication of some directions in 2009:

Measuring web & social media: as websites and social media grow in importance for communication programmes, so too does the need to be able to measure their impact. Web analytics has grown in importance, as will the ability to measure social media.

Media monitoring not the be-all and end-all: after many years of organisations only focusing on media monitoring as the means of measuring communications, there is finally some realisation that media monitoring is an interesting gauge of visibility but not more. Organisations are now interested more and more in having some qualitative analysis of data collected (such as looking at how influential the media are, the tone and the importance).

Use of non-intrusive or natural data: organisations are also now considering “non-intrusive” or “natural” data – information that already exists – e.g. blog / video posts, customer comments, attendance records, conference papers, etc. As I’ve written about before, this data is underrated by evaluators as everyone rushes to survey and interview people.

Belated arrival of results-based management: despite existing for over 50 years, results-based management, or management by objectives, is just arriving in many organisations. What does this mean for communicators? It means that at the minimum they have to set measurable objectives for their activities – which is starting to happen. They have no more excuses (pdf) for not evaluating!

Glenn

Key performance indicators for non-profit websites

I’m just back from my first Web Analytics Wednesday (that’s their logo above), held here in Lausanne, Switzerland. I’m interested in web analytics (as I’ve written about before) as it can be a key component in measuring communications activities today.

The focus of this get-together was on Key Performance Indicators – and in particular KPI for non-profit websites. Here are some of the KPI suggested:

– Bounce rate – number of persons visiting only one page compared to number of people visiting more than one page or vice-versa

– Length of visit (time) – compared to same time last year/month/week

– Depth of visit (number of pages) – compared to same time last year/month/week

And some quite interesting KPI for search:

– Number of visitors using search

– Average number of searches per visit

– % of zero search results (my favorite -high % means people don’t find what they are looking for!)
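To make the indicators listed above concrete, here is a minimal Python sketch that computes them from a list of visit records. The `Visit` structure, its field names and the sample numbers are hypothetical. Bounce rate is computed here as single-page visits divided by all visits, which is the common convention and differs slightly from the ratio wording above. The average number of searches is taken over all visits, not only those that used search.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    pages: int                     # depth: pages viewed during the visit
    seconds: float                 # length of the visit
    searches: int = 0              # site searches run during the visit
    zero_result_searches: int = 0  # searches that returned nothing


def kpis(visits):
    """Compute the KPI discussed above for a non-empty list of visits."""
    n = len(visits)
    if n == 0:
        raise ValueError("need at least one visit")
    total_searches = sum(v.searches for v in visits)
    zero_results = sum(v.zero_result_searches for v in visits)
    return {
        "bounce_rate": sum(1 for v in visits if v.pages == 1) / n,
        "avg_length_seconds": sum(v.seconds for v in visits) / n,
        "avg_depth_pages": sum(v.pages for v in visits) / n,
        "visits_using_search": sum(1 for v in visits if v.searches > 0),
        "avg_searches_per_visit": total_searches / n,
        # Share of searches with no results; 0.0 if nobody searched.
        "zero_result_rate": zero_results / total_searches if total_searches else 0.0,
    }


# Hypothetical sample data.
visits = [
    Visit(pages=1, seconds=10),
    Visit(pages=4, seconds=120, searches=2, zero_result_searches=1),
    Visit(pages=3, seconds=60),
    Visit(pages=1, seconds=5),
    Visit(pages=6, seconds=300, searches=2),
]

for name, value in kpis(visits).items():
    print(f"{name}: {value}")
```

To follow the comparison suggested above, the same function could be run on the visits of two periods (for example this month and the same month last year) and the results compared side by side.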

These are all interesting KPI to think about in monitoring website usage, and they are a good addition to the standard measures usually looked at (e.g. number of visitors and page views).

As we discussed during the get-together, some of these KPI need to be interpreted in their particular context. Take for example “depth of visit” (average number of pages viewed per visitor). A lot of pages viewed can be both positive and negative. It can mean that someone is really doing some in-depth browsing – or it can mean that someone doesn’t find what they want and is clicking everywhere on the site. A solution was suggested by the WAW moderator, Jmarc Vandenabeele: combine both depth and length (time) of visits. If you have short visits with a lot of pages viewed, it could be negative (a sign that visitors are clicking on many pages in a short time to find something), whereas long visits with a lot of pages viewed could be more positive.
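The idea of combining depth and length can be sketched as a simple rule. This is only an illustration: the function name, the labels and the thresholds (5 pages, 180 seconds) are hypothetical and would need to be tuned for a given website.

```python
def classify_visit(pages, seconds, deep_pages=5, long_seconds=180):
    """Label a visit by combining its depth (pages) and length (seconds).

    The thresholds are hypothetical defaults, not recommended values.
    """
    deep = pages >= deep_pages
    long_visit = seconds >= long_seconds
    if deep and long_visit:
        return "engaged"        # many pages over a long time: in-depth browsing
    if deep:
        return "possibly lost"  # many pages in a short time: hunting for something
    return "other"              # shallow visits are not covered by this rule


print(classify_visit(pages=8, seconds=40))
print(classify_visit(pages=8, seconds=600))
print(classify_visit(pages=2, seconds=30))
```

Tracking the share of "possibly lost" visits over time could then complement the average depth figure, which on its own cannot tell the two cases apart.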

Glenn