Posts filed under ‘communicating evaluation results’

Integrating communications in evaluation

Integrating communications into evaluation has been an interest of mine since I left my work as a communicator to take on the topsy-turvy world of evaluation, and I have recently contributed a chapter to the Evaluation Handbook on this subject: “Communicating with Interest Holders”.

I’ve also summarised the main points of this chapter in this presentation.

Using video for evaluation

When thinking about communicating evaluation findings, an often overlooked medium is video. As Better Evaluation says: “When produced well, videos provide an excellent means to convey messages coming out of an evaluation.”

Creating a short video can be an excellent way to communicate key findings to audiences. But videos do require some preparation and planning, notably:

– Think about what will be the topic and story of the video – what messages of the evaluation findings will the video focus on?

– A video is a great opportunity for stakeholders and beneficiaries to speak up and have their voices heard – of course you need their consent to use their images / voices.

– Think of what images can best illustrate the intervention being evaluated – as it is a visual medium! For example, if it is a community-based activity, film the activity and the community.

– These days you don’t need a big budget and expensive equipment – you can film a lot on a smartphone – but if you are not skilled in editing, a small budget for editing would be needed.

It is also important that the evaluation commissioner is on board, as ideally the evaluation team would do some filming during data collection. The challenge for the evaluation team is that a video is rarely included in an evaluation ToR, so the team has to be bold and propose it!

I’ve had the chance to use video several times for evaluation and would always like to do more.

Here is an example where we took a simple approach: filming testimonies of staff for an evaluation of an intervention for the INGO WaterAid, then weaving them together with a narrative, all in under 6 minutes:

And in another example, we used graphics, interviews and testimonies for a climate change evaluation to present a comprehensive overview of the evaluation findings:

And with nearly 1,000 views on YouTube, that is certainly more people than read the report!

Here is a more professionally produced example from the Global Environment Facility; a great summary of the evaluation findings in under 5 minutes:

For further tips and hints for using video in evaluation, read this post from Better Evaluation.

New guide: Equitable Communications Guide for Evaluators

The Equitable Communications Guide is a great new resource for evaluators. Developed by Innovation Network, the Equitable Communications Guide is designed for evaluators in the social sector, but has relevant lessons for anyone looking to improve their communications! The guide explores how to communicate equitably, center the experiences of others, and convey the meaning behind key messages. Download the free guide here: https://innonet.org/news-insights/resources/equitable-communications-guide/

New resource: Integrating Communications in Evaluation on Better Evaluation

I am happy to announce that my guidelines “Integrating communications in evaluation” are now available on the Better Evaluation website. If you haven’t visited Better Evaluation, I suggest you do; it’s full of useful resources and helpful advice.

Integrating communications in evaluation – presentation slides

Earlier this year I gave a presentation on “integrating communications in evaluation” and I am now happy to share the presentation slides from the event:

Event: Integrating communications in evaluation – 11h00 – 30 January 2020 ILO, Geneva

For those in the Geneva region and interested in communications and evaluation – I will be making a presentation in January 2020, read more:

The Evaluation Office of the International Labour Organization invites you to a presentation by Glenn O’Neil. The topic of Dr O’Neil’s presentation will be Integrating communications in evaluation.

Communications is an important aspect of evaluation; it has been said that without communications, evaluation would not be possible.

Evaluation commissioners and evaluators are already communicating – but is communications being used optimally to support the evaluation process? In this presentation, Dr O’Neil will challenge the assumptions of how communications “works” for evaluations and propose solutions based on his experience as both a communicator and evaluator, backed up by communication practice and theory.

Dr O’Neil is the founder of Owl RE, an evaluation and research consultancy in Geneva. Over the past 15 years, he has led over 100 evaluations and reviews for some 40 organizations, including UN agencies, NGOs, foundations and governments. Dr O’Neil was previously a professional communicator in the non-profit sector and has produced his own guide on Integrating Communications in the Evaluation Process (pdf).

The event will take place from 11:00-12:00 on Thursday 30 January in the ILO Library on R2 (main floor). After the presentation, there will be a networking lunch (at your own expense) in the ILO cafeteria.

No need to register – please come to the ILO reception at 10:50 and ask for Craig Russon of the ILO Evaluation Office.

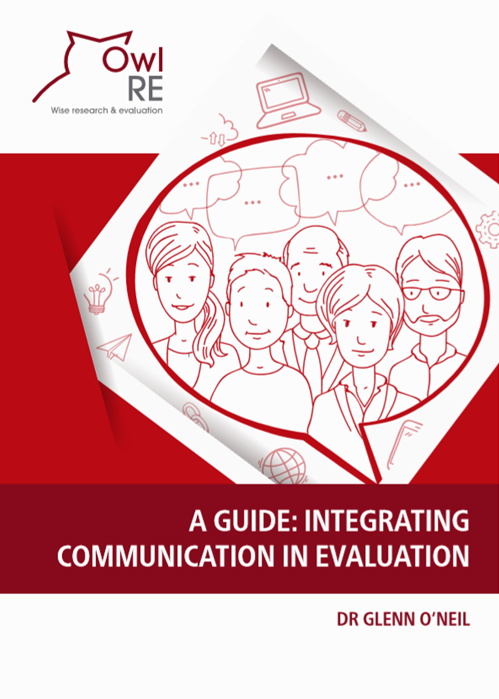

A Guide: Integrating Communication in Evaluation

I’ve put together this guide for anyone who wants to learn how communication can more effectively support evaluation: evaluation consultants, communication consultants, evaluation commissioners and programme/project staff participating in evaluations.

Using infographics to present evaluation findings

I’ve written previously about using infographics to summarise evaluation findings; here is another recent example, where my evaluation team used an infographic to present the findings of an evaluation. It’s only a partial view; you can see the complete infographic on page 5 of this report (pdf).

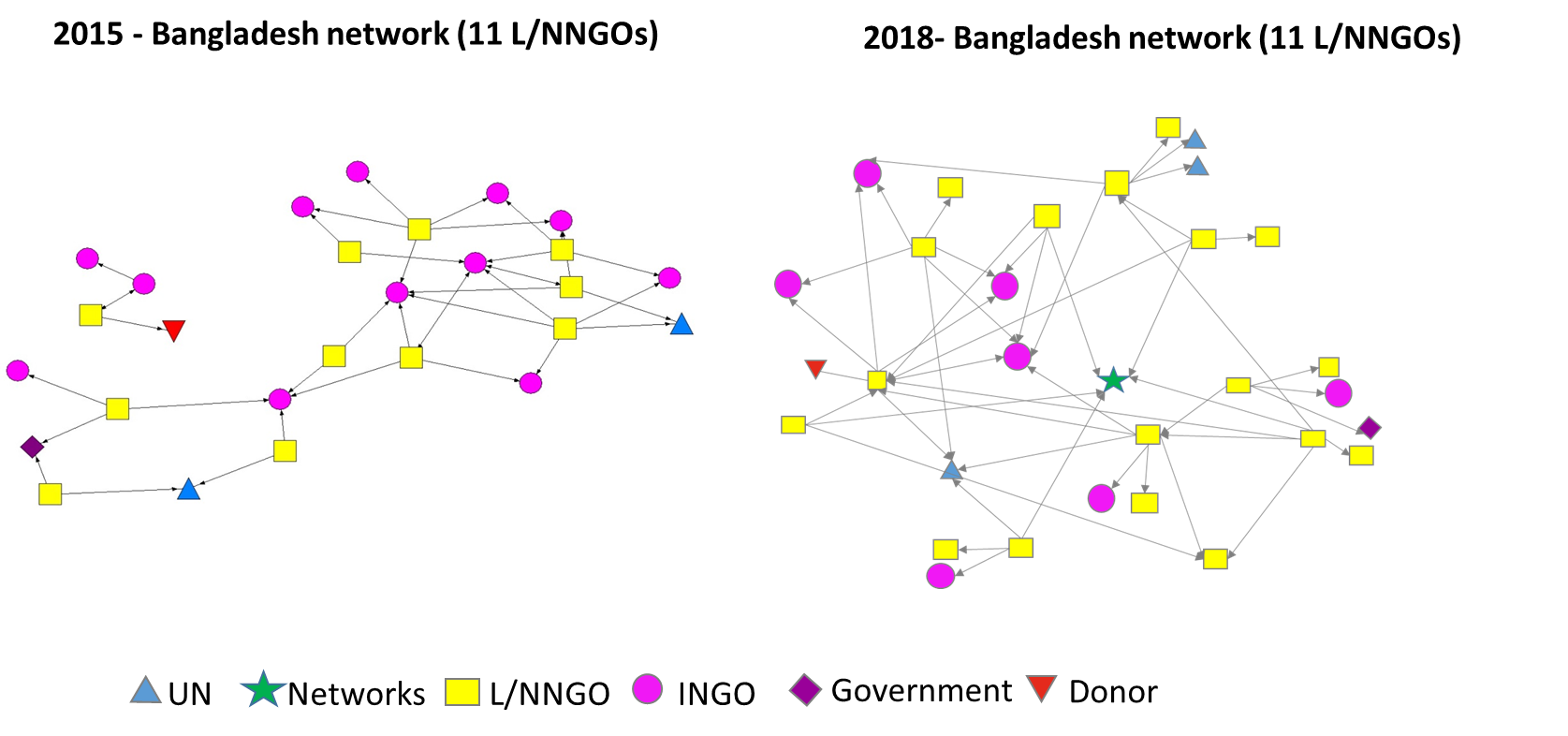

Network mapping as an evaluation tool

I’ve posted previously about network mapping as an evaluation tool and recently I had the opportunity to use network mapping for an evaluation.

In an evaluation of the Shifting the Power project, we were interested to see how local networks of NGOs had grown over the three years of the project. We were lucky that the project had carried out a mapping of NGO networks at the start of the project in 2015, and we then did the same in early 2018. Here you can see the results comparing 2015 to 2018 from Bangladesh – interesting data!

You can view the full evaluation report here (pdf).

Infographics to present evaluation findings

I’ve posted previously about using infographics to summarise evaluation findings; here is a recent example of using an infographic to present research results (click on the image to see a larger, complete version). Admittedly we packed a lot into this infographic, but it is still a good summary!